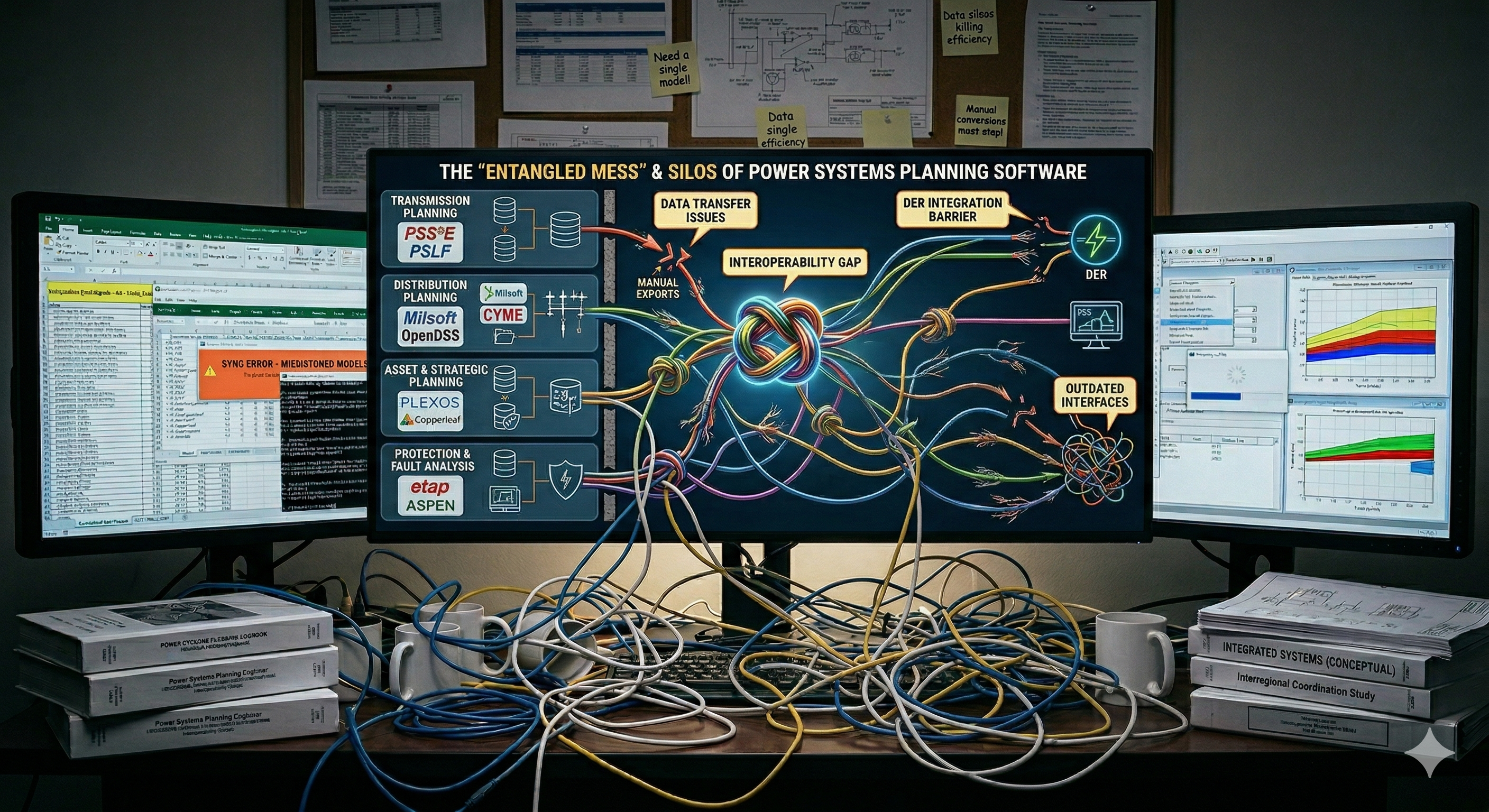

The power industry is in the middle of an enormous planning challenge. Where to site solar farms, how to expand transmission, how to integrate millions of distributed resources, how to connect gigawatts of new datacenter load that appeared in no one’s forecast fifteen years ago — these decisions all interact. But the software tools we use to study them were built to work in isolation. And the mismatch between what integrated planning demands and what today’s tools can deliver is not primarily a computing problem. It’s a software architecture problem and its roots are organizational.

The Software Mirrors the Org Chart

Organizations which design systems (in the broad sense used here) are constrained to produce designs which are copies of the communication structures of these organizations.

Melvin Conway, How Do Committees Invent?

There’s a well-known idea in software engineering called Conway’s Law: organizations build systems that mirror their own communication structures. In the power sector, this observation is a clear reflection of the way that utilities where organized befote the unbundling efforts in the 90s. Generation planning departments, historically staffed by mechanical and chemical engineers, built tools focused on fuel economics and long-run energy balancing. Transmission planning departments, staffed by electrical engineers, built entirely separate power flow and short-circuit tools. These groups worked in silos even within vertically integrated utilities and then the separation got encoded in regulation like FERC order 888.

So what we have today is a collection of fragmented, non-interoperable tools that force planners and operators to spend enormous effort on data reconciliation rather than actual analysis. And now consider what happens when a hyperscaler shows up requesting 500 MW of firm power at a single substation. That request touches generation adequacy, transmission capacity, distribution interconnection, and potentially new gas or clean firm generation — all at once. No single legacy tool handles it, and the tools that handle pieces of it weren’t designed to talk to each other.

The Data Problem Comes First

The first fundamental issue is inconsistent data models. Each planning application uses different abstractions for the same physical components, and many embed simplifying assumptions directly into the data itself. When a production cost model and a power flow model define “max power” differently, moving data between them requires custom translation — and each translation is an opportunity for silent errors.

The fix starts with a schema-first design philosophy: define the data model before building applications, organize it around standardized schemas, and treat data as independent of any particular study or tool. Data should outlive models. When a better optimization engine or simulation tool comes along, the underlying dataset should remain valid and reusable.

This sounds straightforward, but it cuts against decades of practice. Most planning software optimizes its data structures for internal convenience, not for sharing. Getting this right means choosing the right level of abstraction — detailed enough to be useful across applications, but not so granular that adoption becomes impractical — and establishing a real data lifecycle: ingestion, validation, versioning, and archival as a continuous process, not an afterthought.

Not All Workflows Are the Same

Beyond data, integrated planning involves fundamentally different kinds of analytical workflows, and the software needs to support all of them.

Serial gate-clearing is the simplest pattern. You run a sequence of independent checks — thermal screening, voltage analysis, short-circuit study — and each stage produces a pass or fail. The tools don’t need to agree with each other; they just need access to the same scenario assumptions.

Sequential simulation is more demanding. Applications run in a coordinated sequence — production cost modeling followed by AC power flow, for instance — sharing results across domains and time steps. This requires rich data exchange and generates a real post-processing burden.

Convergence workflows are the hardest. Multiple applications iterate, exchanging data until they reach a consistent solution. Capacity expansion feeds resource adequacy, which feeds contingency analysis, which loops back to capacity expansion. These need robust error handling, lightweight data exchange, and orchestration layers that can manage iteration and tolerate partial failures. When you’re trying to evaluate whether a region can absorb three new datacenters while meeting reliability standards and emissions targets, this is the kind of workflow you actually need — and it’s the kind that today’s tools handle worst.

The key insight is that no single workflow design works for every institution or planning question. The software has to accommodate this diversity, which means APIs need to be flexible without baking in assumptions about how any particular analysis will be structured.

Repeatability Needs More Than Automation

One thing that comes up constantly in planning reform discussions is the need for repeatable analysis. And there’s a common misconception that repeatability just means automating what planners currently do by hand. But reality is that it doesn’t. Automating a brittle, hard-coded workflow just makes it break faster when requirements change — and requirements always change. I read somewhere that automating a broken process add no value to the enterprise. That’s the case here, automatic results exchange in CSV is a nightmare.

Real repeatability requires stable abstractions: extensible schemas that can accommodate new equipment types without invalidating existing data, versioned APIs with backward compatibility, and clean separation between workflow logic and interface contracts. When things change, the changes should be deliberate, documented, and traceable — not chaotic. This matters more than ever when load growth is no longer gradual and predictable — when a single datacenter interconnection request can reshape a region’s capacity needs overnight, and planners need to rerun and compare studies quickly across shifting assumptions.

Where This Is Heading

The future looks like a shift from collections of desktop tools to platform-based architectures. A planning platform would provide core services — data management, model validation, time-series processing, scenario management — while specialized analytical modules plug in through well-defined interfaces. The vision isn’t a monolithic vendor suite, it’s an ecosystem where adding a new capability means building a service that conforms to platform standards, not building an entirely new application with its own data pipeline. The future needs to look more like the App Store than Windows 1998.

A Word on AI

It’s tempting to look at this mess and think AI will sort it out. I have spoken with investors eager to fund nice interfaces with AI in it but with a 1960’s application under the hood. The reality, AI won’t save you — at least not in the way most people hope. Layering machine learning on top of fragmented tools and inconsistent data doesn’t fix the fragmentation; it inherits it. If the underlying data models are incoherent, an AI trained on their outputs will faithfully reproduce that incoherence, faster and at scale. There is no shortcut past the engineering.

The real opportunity for AI in planning — helping stakeholders explore trade-offs, identifying which uncertainties drive decisions, building fast surrogates for expensive simulations — only becomes possible once the data infrastructure, APIs, and workflows are sound. The foundation has to come first and it seems that this is where the no one is paying attention.

The Takeaway

Improving integrated planning isn’t about better models or faster solvers. It requires rethinking how planning software is designed, how data flows between applications, and how organizations collaborate around shared analytical infrastructure. Schema-first design, modular APIs, semantic versioning, purpose-oriented workflows — these are foundational practices that make cross-domain planning practically feasible rather than aspirational.

The fragmentation we live with today was not inevitable. It followed from how organizations were structured and how software was built to serve those structures. Changing the software means, at least in part, changing how we think about the work itself.