Why Power System Planning Software Is Broken

The tools used for integrated power system planning were built to work in isolation. Fixing them is not a computing problem — it's a software architecture problem with organizational roots.

Let’s get something out of the way first. I am wrong often. I have been wrong every year since 2008 (including 2026) thinking this is Ferrari’s year to win the F1 championship, and anyone with enough patience to go through my GitHub history will find plenty of evidence for wrong judgment. I am not particularly bothered by personal wrongness. I think it is part of the human condition to be wrong the way it is to catch a cold. Unpleasant, occasionally embarrassing, fundamentally unavoidable.

What keeps me up at night is something else: collective wrongness. Those ideas that outlive the individuals who first believed them and become zombie ideas, things that refuse to die and infect other ideas to keep themselves alive.

During a particularly bleak stretch of my PhD, the part where you’ve been staring at your own models long enough that you can no longer tell the difference between brilliant and stupid, and you’re increasingly unsure the difference matters, I read Chuck Klosterman’s But What If We Are Wrong? It is not a technical book. It is barely academic. It is the kind of book that disguises a genuinely unsettling philosophical hand grenade as a pop-culture meditation, and it fucked with me completely. The question it poses is simple to the point of cruelty: what does it feel like, from the inside, to be part of a collective that is categorically wrong about something? Wrong in the way that from scholarly apparatuses to every day culture can be arrayed in confident support of an idea that turns out to be incorrect.

In Being Wrong: Adventures in the Margin of Error, Kathryn Schulz makes an observation so obvious it should not be revelatory, and yet somehow is: being wrong feels exactly like being right. There is no warning label that distinguishes a confident true belief from a confident false one. You cannot feel an error as an error from inside your epistemic bubble.1

This is what Schulz calls naive realism: the intuitive, invisible assumption that the world is at it appears and when considering any question we must be rational to the point of dismissing any unverifiable data as proposterous and assume that all the information we currently have is all the information that will ever be available.

We have no idea what we don’t know, or what we’ll eventually learn, or what might be true despite our perpetual inability to comprehend what that truth is.

Chuck Klosterman, But what if we’re wrong?

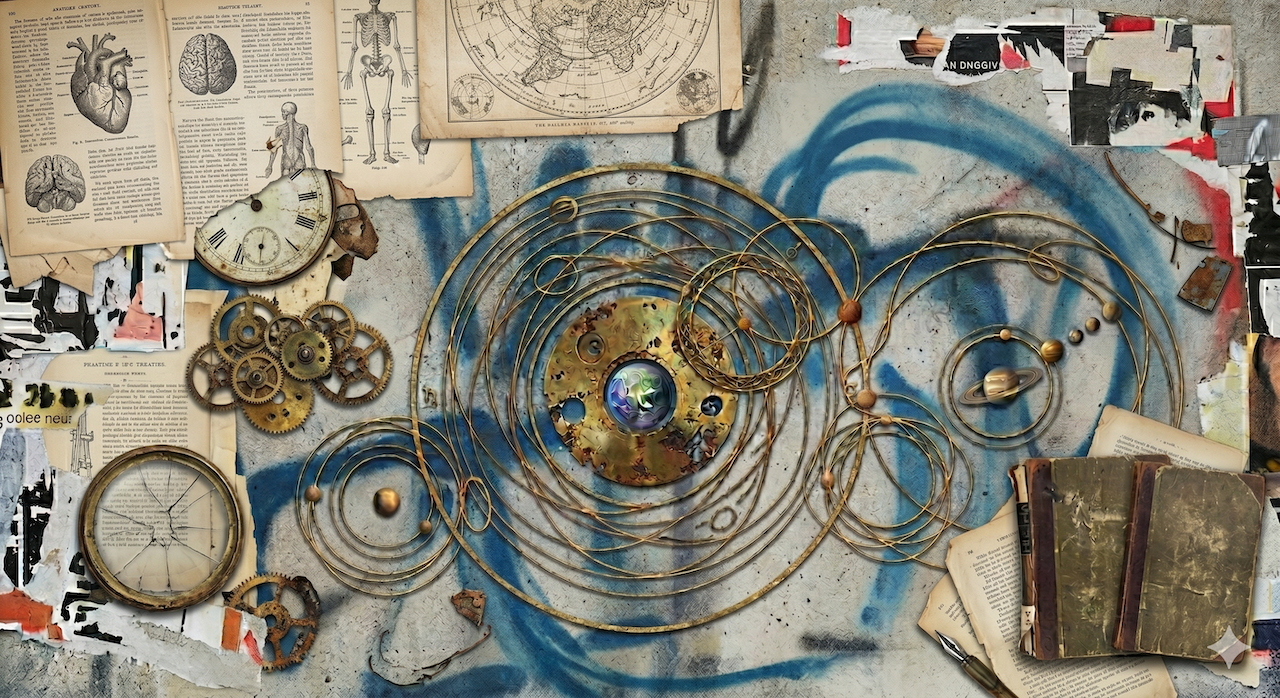

For roughly fourteen centuries, pretty much everyone believed the Sun moved around the Earth, from peasants to the most brilliant scientists. Klosterman made me think about something I’d never considered: Aristotle was wrong about almost everything in the natural sciences, and people believed him because of the vibes of his ideas. What I find most fascinating is that the centuries-old attempt to maintain the view that the Earth sat at the center of the universe led to the invention of an almost comically elaborate intellectual tower called the epicycle and deferent.

If a planet didn’t trace the expected orbit around the Earth, you added a small circular orbit on top of the big circular orbit, a circle riding on a circle. When that wasn’t enough, you added a deferent: a slightly off-center pivot point to account for the irregular speeds. Over time, the Ptolemaic system became a baroque clockwork of nested circles and offset pivots, each element motivated by a real observation of a real discrepancy. It was complicated, yes. But it was not arbitrary. And it worked, in the sense that it let astronomers predict where Mars would be on a given night with genuinely impressive accuracy.2

Here is where Schulz’s insight stops being anecdotal, and its consequences become real and structural. The Ptolemists were not experiencing a theory about the heavens. They were experiencing the heavens. Naive realism guaranteed that the geocentric cosmos wasn’t a hypothesis they held; it was the reality they inhabited. Each new epicycle didn’t feel like a patch on a failing model. It felt like a deeper understanding of how things actually are, which is exactly how it feels to be wrong in a way that will never reveal itself from the inside.

The alarming part is that the Ptolemaic model was not just wrong; it was wrong in a way that perpetually improved without ever becoming right, because epicycles converge toward the truth mathematically, in the limit as their number approaches infinity.3 Better and better tables of planetary positions. Closer and closer to accuracy. More and more confident. Never, ever discovering gravity, or ellipses for that matter. Which is, depending on your mood, either a beautiful instance of mathematical precision shining through bad metaphysics, or the single most depressing footnote in the history of science: a civilization can stumble into the new math for centuries, refine it relentlessly, and still completely miss the underlying physics. Being approximately right about the trajectory is not the same as being right about the inner workings of the universe.

It took roughly two thousand years and the burning at the stake of Giordano Bruno to begin dispelling the whole epicycle affair and even then, for Newton to finish the job he had to invent a new mathematical language, one that doesn’t arise naturally from the task of fitting circles to curves. The revolution that gave us everything from fluid dynamics to machine learning emerged from abandoning a framework that for two millennia felt right, and questioning that feeling could get you killed. Ptolemy’s system was so good at its task that it actively foreclosed pursuing alternative world views, and along the way helped justify the Catholic Inquisition.

Let’s say you’ve spent twenty years mastering epicycles. You know the geometry cold. You know which deferent to reach for when a new observation doesn’t fit. You are, by any reasonable standard, an expert, the kind of expert whose expertise is itself part of the problem. Now someone hands you Newton’s Principia.

The mathematics inside would look alien. There are no circles. There is no fitting procedure. There are instead bizarre notations describing quantities that are in the process of becoming something: infinitesimals, limits, derivatives, the whole unsettling apparatus of a calculus that makes your worldview and identity collapse.

That’s the subtle point Schulz would press on: your rejection would not feel like defending a theory. It would feel like correcting an error. Because naive realism doesn’t just make you believe your model is right, it makes you perceive challenges to it as departures from obvious reality. The Ptolemist reviewing Newton’s infinitesimals would not think: this contradicts my framework. He would think: this is simply wrong. The book is heresy, you and your friends from the monastery all agree that the consequences of these ideas are unacceptable and its time to get the torches ready to start the burning party.

Wrongness at scale doesn’t produce doubt, it produces consensus around wrong ideas. The consensus produces institutions and rules; and institutions produce incentives to maintain that consensus, which is where things get genuinely difficult.4

I work in energy systems modeling. I build and study the tools we use to understand how modern power grids work, and whether they will keep working as we fill them with solar panels, wind turbines, batteries, datacenters, the whole nine yards. After decades of intellectual stagnation we now need to ideate a system very different from the one coal and hydro plants dominated, and for which all our frameworks were designed. This work is deeply technical and riddled with hypothetical epicycles born in the middle of the 20th century, when power systems were the most interesting technology on Earth.

Let me describe two of them that I think really set back our field and limit the development of new ideas in energy modeling. This section is very critical, so reader be warned. Believe me, the critique comes from a place of love and care.

The first is ELCC. Effective Load Carrying Capability is a framework for measuring how much “capacity” a generator contributes to system reliability, that is, how much it helps ensure the lights stay on during the most stressful moments. It is not a bad idea. It was developed carefully, grounded in real observations about how synchronous thermal generators behave, and it produces numbers that regulators trust, that markets are built around, that entire planning processes depend on.

But as grids fill with wind, solar, and storage (resources whose availability is correlated, non-stationary, and deeply entangled with one another across geography and time), ELCC becomes something like a deferent with epicycles. You add weather-year conditioning. You adjust for marginal contributions. You add locational diversity corrections. Then we adapt this framework for DERs and EVs and design economic incentives around it. Each patch is motivated by a real observation of a real discrepancy that needs a new correction. The mental model becomes more complicated and intractable. And yet the fundamental question (what does “reliable capacity” even mean in a grid dominated by variable resources, storage, and demand that can flex?) is foreclosed by the framework before you can even start the conversation.

And then comes the move that is, in some ways, the most insidious of all. When the patches start straining the original framework past credibility, we wrap the whole apparatus in a Monte Carlo Stochastic simulation 5. We run ten thousand draws, report percentiles, if we are feeling fancy, compute conditional value-at-risk. We produce elegant probability distributions over loss-of-load expectation, which is itself already a statistical construct stacked on top of capacity contributions that are themselves derived from a framework we are no longer entirely sure describes anything real.

I have a name for this. I call it number washing: the use of simulations to manufacture certainty about processes we have, in fact, no information about. You don’t know what the joint distribution of wind, solar, demand, and storage state-of-charge will look like in the year 2035. Nobody does. The grid has never existed in the configuration we are about to put it in, the climate is no longer stationary in any useful sense, and the consumer behavior layered on top of all of it is being rewritten in real time by electrification and tariffs and habits that did not exist five years ago. We don’t have priors. All we have are vibes, dressed up as scientific priors, fed into a simulation engine that obediently produces ten thousand samples from a distribution we essentially made up, but which gives us that warm fuzzy feeling that we are right.6

The Monte Carlo doesn’t validate the model because it can’t. It just quantifies the uncertainty within the assumptions we already invented, which means that if the underlying distributions are wrong, the simulation gives you a very confident probability cloud around what is almost certainly the wrong answer.7 At no point does anyone ask the inconvenient question: has any of this ever been checked against the world? Are the loss-of-load distributions our models produce anywhere near the loss-of-load distributions our grids actually experience? Has anyone, in any rigorous empirical sense, demonstrated that ELCC works?

The answer, as far as I can tell, is no, we have decades of consistent practices and essentially no validation. “Something is better than nothing” is the usual cop-out when I ask the question. It is what you say when you’ve quietly accepted that things got out of control and the explanations are missing.

The second is EMT for everything. This one is a real frustration.

For most of the twentieth century, power systems engineers simplified electromagnetic transients (the fast, waveform-level oscillations that happen at the speed of the physics) as algebraic equations. This was a reasonable choice. The machines they were studying (synchronous generators, largely) were slow enough that the fast dynamics didn’t matter for the stability questions they cared about. The resulting framework, called RMS, or quasi-static phasor, or just “the way we do fast time-domain simulation”, was built for a world in which rotor stability was the dominant question. It is based on an elegant, computationally tractable theory, and embedded in every utility and every engineering curriculum.

Then we started replacing synchronous generators with inverter-based resources: solar inverters, wind turbines, grid-scale batteries. These devices switch at tens of kilohertz. Their control loops operate at timescales the RMS framework was explicitly designed to ignore. And so, predictably, the RMS models started failing in ways that were sometimes spectacular.8

The field’s response has been to demand full waveform EMT simulation for everything. And I understand the impulse: if your simplified model is failing, use the detailed one. EMT captures the actual waveform. It is, in some sense, closer to the physics. It is the Ptolemaic astronomer’s version of “just add more epicycles” and the community has embraced it with something approaching religious fervor.

The problem is that full waveform EMT is computationally brutal. Simulating a large grid at microsecond timesteps, for minutes of real time, is not just expensive; it is often intractable. And more importantly, it is not always necessary. In work I did with colleagues,9 we went back to first principles and asked a question that had somehow stopped being asked: which dynamics actually matter for which phenomena, and at what timescale? What we found was that the blanket demand for EMT was itself a kind of naive realism: the waveform looks like reality because it simulates the actual voltage shapes, and the community had forgotten that resolution is not the same as truth. You can get a very high-resolution photograph of the wrong thing.

Intermediate frameworks like dq0 can capture the relevant inverter dynamics at a fraction of the computational cost, without the pretense that you are simulating physics all the way down to the waveform. But these approaches look alien to someone trained to see EMT as the gold standard.

We have proposed extensions to this work for years now. The grant reviewer comments are, I promise you, comical and indistinguishable from a medieval astronomer reviewing Newton.10 Fortunately, in the 21st century, the consequence for being an apostate is no longer burning at the stake, just some online rage.

Because a post without mentioning AI is just too early 21st century and no one cares about the stone age, here is a thought experiment that I find genuinely unsettling.

Suppose you gave an AI system the task of making sense of planetary motion. But instead of starting from scratch, you trained it on fourteen centuries of Ptolemaic astronomy: the observations, the models, the refinements, the commentaries, the corrections. The model would be extraordinary at predicting planetary positions. Its benchmark performance would be excellent. It would ace every evaluation you could design.

But would it discover calculus? I have no idea, seriously. Dario Amodei, if you are reading this, can you answer the question, please? I read all my DMs and promise to respond.

However, I doubt it because the problem it has been trained to solve could be the wrong one if sufficient data points point in the wrong direction. The objective, predict where Mars is on a given night, was answered well enough by the existing machinery of the epicycles. There is no gradient pointing toward “invent a new branch of mathematics to explain why things move the way they do because we might be wrong about the entire order of the universe.”

My hypothesis, which could be another entry in the list of things I am wrong about, is that an AI trained on a corpus saturated with naive realism inherits that naive realism wholesale. The texts it learns from don’t say “here is our current best model of reliability, and it might be wrong.” They say “here is how reliability studies are conducted.” The distinction is invisible in the training data. The model doesn’t just learn the epicycles; it learns to treat the epicycles as the mechanism that interprets reality. An AI in the 16th century would have drowned out Copernicus and saved Giordano Bruno’s life by gently informing him that his cosmological ideas were probably just a hallucination.

And there is something even more troubling underneath this. The way we are building and deploying these systems carries an implicit assumption so grand that we never bother to state it: that we have essentially arrived. That the sum of digitized human text (the papers, the textbooks, the standards documents, the Stack Overflow threads) represents not a snapshot of knowledge at a particular moment in history, but something close to its destination.11 We are encoding current consensus as though it couldn’t possibly be terminally incorrect. It makes me think the next big model should be called Aristotle, but that is probably bad product naming, though better than Grok.

This is not a small problem. It is the premise Klosterman’s entire book was written against, long before this whole AI craze. It traces to the deepest architecture of human cognition: we don’t like to change our minds, which is almost as true as the fact that we will get things wrong.

We are building extraordinarily fluent systems and benchmarking them against outdated questions, then expecting new solutions. An AI trained on the full corpus of ELCC analysis will produce more ELCC analysis: better-calibrated, faster, more scenarios, nicer plots. An AI trained on decades of EMT literature will produce more arguments for EMT, without ever pushing back and saying, “do you actually need this?” Neither system will ask whether the framework should be abandoned. That question doesn’t appear in the training distribution. It is up to us, the actual human intelligence, to devise the new questions and the new answers, the way Newton did.

I want to end by asking you something that will feel uncomfortable. I was raised Catholic, so suffering is my self-care love language. But if you do this, you should reward yourself with something nice afterwards.

What are the epicycles in your professional toolkit? The methods you reach for automatically, so established that questioning them reads as incompetence rather than intellectual honesty. Not the obviously wrong ideas, but the ones that are almost right. The ones that generate your best results and your most confident responses, and that may therefore be your biggest blind spot.

The Ptolemists were not failing. They were succeeding, by every metric available to them like sea navigation. The framework you should most urgently question may be the one generating your strongest results right now, the one you tell yourself is “better than nothing.” Plotemic tables were good enough for ships in the sea but not good to send probes into deep space or for the GPS system to work.

We can’t tell which ideas are wrong for sure until the present has become the past. In the meantime, while we keep living in the present, the best we can do is undertake the intellectual effort to look inwards. Without that introspection, we are hopelessly positioned to try to identify our collective mistakes. Or, as the old adage says, “you can’t read the label from inside the bottle.”

What actually keeps me sleepless (and what I hope will keep you sleepless too from now on, unless I really am the only neurotic person thinking about the epistemology of power systems modeling) is the possibility that the wrongness compounds. That collective investment in a framework creates institutional gravity so strong it bends careers, engineering practices, and professional relationships around it, until abandoning the framework is not just intellectually difficult but professionally suicidal.

There is no simple solution to this, and I won’t pretend to offer one. But I think the first move is just: noticing. Noticing when your criticism of a new idea sounds less like “here is what the evidence shows” and more like “this is obviously not how it works.” That feeling of obvious-ness is worth interrogating. It is how I imagine the Ptolemists could have felt about infinitesimals, and that is when I pause and ask myself, But what if we’re wrong?

Schulz is precise about something that matters here: the problem is not that we feel certain when we should feel uncertain. Certainty and uncertainty are distinguishable feelings. Right and wrong are not. You can feel genuinely uncertain about a true belief and completely confident about a false one. The emotional signal tracks your epistemic state, how much you feel you know, not the actual relationship between your belief and the world. ↩

The accuracy was real enough that Ptolemaic tables remained useful for navigation well into the early modern period, after Copernicus. Being wrong about the mechanism and being useful for the task are not mutually exclusive, which is the part that should make you most uncomfortable. ↩

Here is the cosmic punchline: epicycles are, in the limit to infinity, mathematically correct, you can approximate any periodic motion to arbitrary precision thanks to the Fourier series. The astronomers were essentially doing harmonic analysis by hand for roughly fifteen hundred years before Joseph Fourier showed up in 1822 to formally explain why the whole baroque mess had been “working.” ↩

This is the amplification that Schulz gestures toward but doesn’t fully develop. Naive realism shared across a community produces institutions: funding criteria, hiring committees, peer review expectations, graduate curricula, all organized around a shared map that no one recognizes as a map. At this point the wrongness is not just sticky it becomes part of the culture. ↩

I know Monte Carlo Stochastic is redundant. I am not trying to be technical but to emphasize the point that eventually the whole terminology doesn’t mean anything anymore. ↩

This is the part that I think gets lost in the polite language of “scenario analysis” and “probabilistic planning.” When you genuinely don’t know the distribution of an input, sampling from a distribution you invented does not give you information about the system. It gives you information about your invention. Those are extremely different things, and a Monte Carlo simulation cannot tell them apart, because to the simulation, your invented distribution is reality. It cheerfully samples from it and reports back. The output looks just like the output you would get from a real distribution, which is, I would argue, the entire problem. ↩

There is a deep cultural confusion here between uncertainty quantification and model validation. They sound similar. They are not the same thing. Uncertainty quantification tells you how much wiggle room your model has given its own assumptions. Validation tells you whether the model corresponds to anything outside itself. A model can be exquisitely uncertainty-quantified and completely wrong about the world, and our field has, for reasons I find genuinely fascinating and slightly depressing, decided that the first thing is more or less an acceptable substitute for the second. ↩

“Spectacular” is a euphemism. There were real grid incidents in which inverter-based resources interacted with each other and with the network in ways that the phasor-domain models had, by design, no capacity to capture. The models didn’t just fail quietly. They failed in ways that surprised people who had trusted them for decades. ↩

Lara, J.D., Henriquez-Auba, R., Ramasubramanian, D., Dhople, S., Callaway, D.S., and Sanders, S. “Revisiting Power Systems Time-Domain Simulation Methods and Models.” IEEE Transactions on Power Systems, 2023. https://arxiv.org/abs/2301.10043. A paper that I am not sure changed any minds but at least asked the question out loud, which felt necessary. ↩

Not that I consider myself Newton, but we once received feedback in a proposal from the now-defunct Solar Office explicitly stating “This proposal may be ahead of its time.” ↩

Yes, real work is happening on getting models to express uncertainty and acknowledge the boundaries of their training. The architecture is still built on the claim that the corpus is the truth, and the more fluent the model becomes at speaking the corpus, the more thoroughly it inherits whatever the corpus was confidently wrong about. Capability and inherited error are not opposing forces. ↩